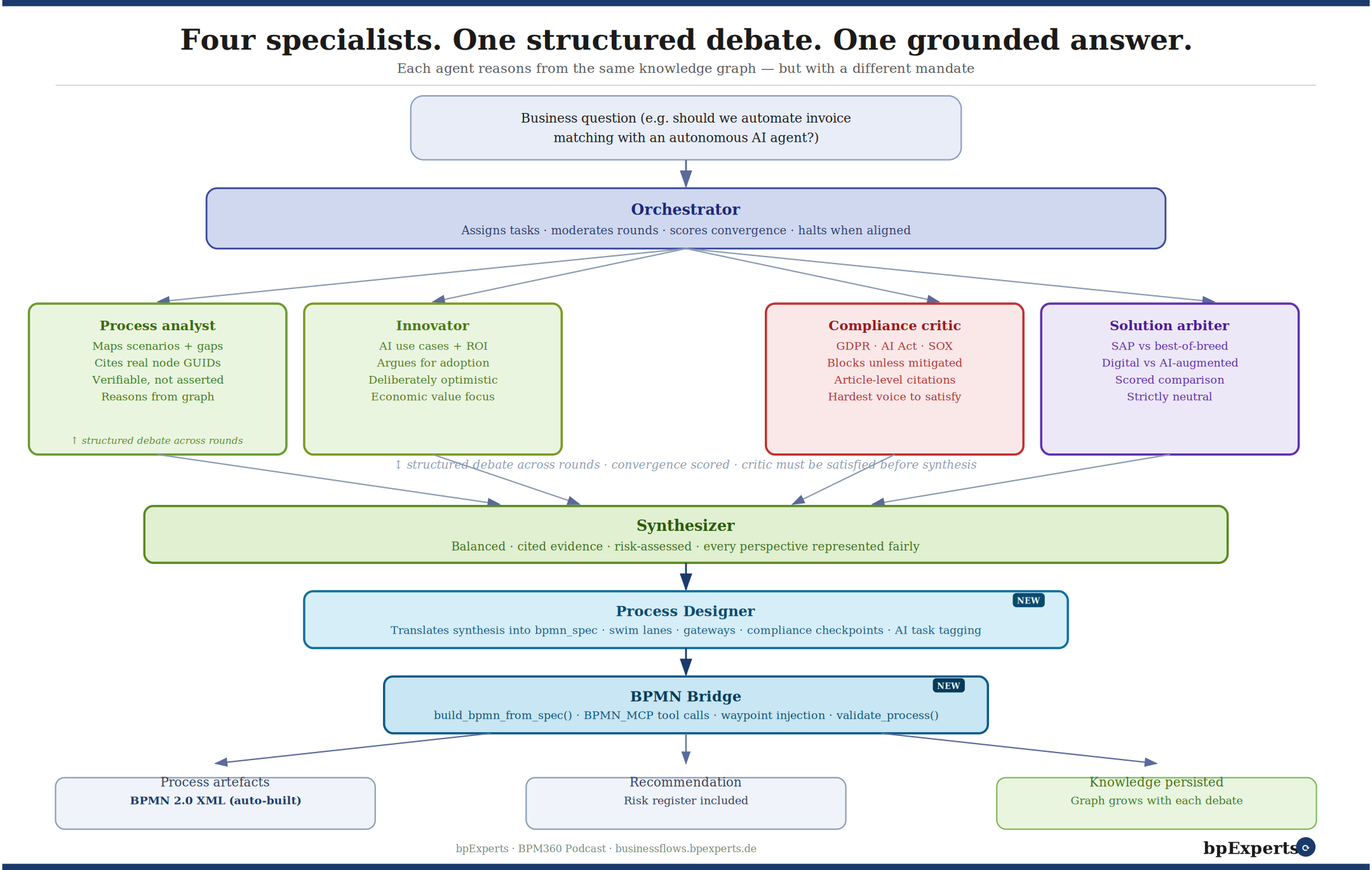

The updated debate architecture — with Process Designer and BPMN Bridge added as new execution steps between synthesis and output.

In our last article we described a brain that reasons. Four specialist agents — process analyst, innovator, compliance critic, solution arbiter — debate a business question, ground their positions in the knowledge graph, and converge on a synthesis that represents every perspective fairly. The process designer then translates that synthesis into formal process artefacts: BPMN structures, SIPOC tables, Turtle diagrams.

The question we left open was: what happens after the design is agreed?

Previously, the answer was: a human takes the artefacts and builds the process model. The BPMN was described but not constructed. The recommendation was documented but not visualised. The engagement produced intelligence — but intelligence still needed to be converted into deliverables by hand.

We closed that gap. This article describes how.

What changed: two new components

The architecture now has two additional components that connect the debate output directly to production-ready deliverables.

The BPMN Builder — built on the BPMN_MCP server — is a stateful tool service that constructs valid BPMN 2.0 XML from structured instructions. It knows how to create pools, lanes, events, tasks, gateways, and sequence flows. It enforces dependency ordering — lanes before elements, elements before flows — and validates the result against the BPMN 2.0 standard before emitting XML. It does not reason. It constructs. That is the right division of labour.

The BPMN Bridge connects the debate pipeline to the BPMN Builder. It reads the bpmn_spec block that the process designer agent now emits as part of its synthesis, and orchestrates the sequence of tool calls required to build the complete process model. It handles the translation from semantic intent — swim lanes named after business roles, tasks typed as human or automated, gateways labelled with decision logic — into the precise API calls that produce standards-compliant XML.
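As a rough illustration of that orchestration, the sketch below turns a spec into an ordered list of tool calls. The tool names (`create_pool`, `create_lane`, `create_element`, `create_flow`, `validate`), the spec keys, and the `call_tool` callback are all assumptions made for this example; the real bridge and the BPMN_MCP server may use different names and arguments.

```python
from typing import Any, Callable

ToolCall = tuple[str, dict[str, Any]]


def plan_tool_calls(spec: dict[str, Any]) -> list[ToolCall]:
    """Order calls so that every dependency exists before it is referenced."""
    calls: list[ToolCall] = []
    # 1. Pools and their lanes come first.
    for pool in spec.get("pools", []):
        calls.append(("create_pool", {"id": pool["id"], "name": pool["name"]}))
        for lane in pool.get("lanes", []):
            calls.append(("create_lane", {"pool_id": pool["id"], **lane}))
    # 2. Flow nodes (events, tasks, gateways) are placed into existing lanes.
    for kind in ("events", "tasks", "gateways"):
        for node in spec.get(kind, []):
            calls.append(("create_element", {"kind": kind, **node}))
    # 3. Sequence flows can only connect nodes that already exist.
    for flow in spec.get("flows", []):
        calls.append(("create_flow", dict(flow)))
    # 4. Validation runs last, on the finished model.
    calls.append(("validate", {}))
    return calls


def run_bridge(spec: dict[str, Any],
               call_tool: Callable[[str, dict[str, Any]], Any]) -> list[Any]:
    """Execute the planned calls through whatever client `call_tool` wraps."""
    return [call_tool(name, args) for name, args in plan_tool_calls(spec)]


example_spec = {
    "pools": [{"id": "procurement", "name": "Procurement",
               "lanes": [{"id": "requester", "name": "Requester"}]}],
    "tasks": [{"id": "submit_request", "type": "userTask", "lane": "requester"},
              {"id": "classify_intake", "type": "serviceTask", "lane": "requester"}],
    "flows": [{"id": "flow_1", "source": "submit_request", "target": "classify_intake"}],
}

for name, args in plan_tool_calls(example_spec):
    print(name, args)
```

Separating planning from execution keeps the ordering logic testable without a running tool server: `plan_tool_calls` is a pure function, and `run_bridge` only adds the side effects.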

The result is that a business question entered into the debate pipeline now produces, without any additional human effort:

A structured recommendation with a risk register, grounded in the knowledge graph

A BPMN 2.0 process model, validated and ready to import into Signavio, ARIS, Camunda, or SAP Cloud ALM

A corporate-branded PowerPoint presentation, built from the bpExperts template, with all slides populated from the debate output

A live interactive process viewer, renderable directly in a browser or embeddable in a website

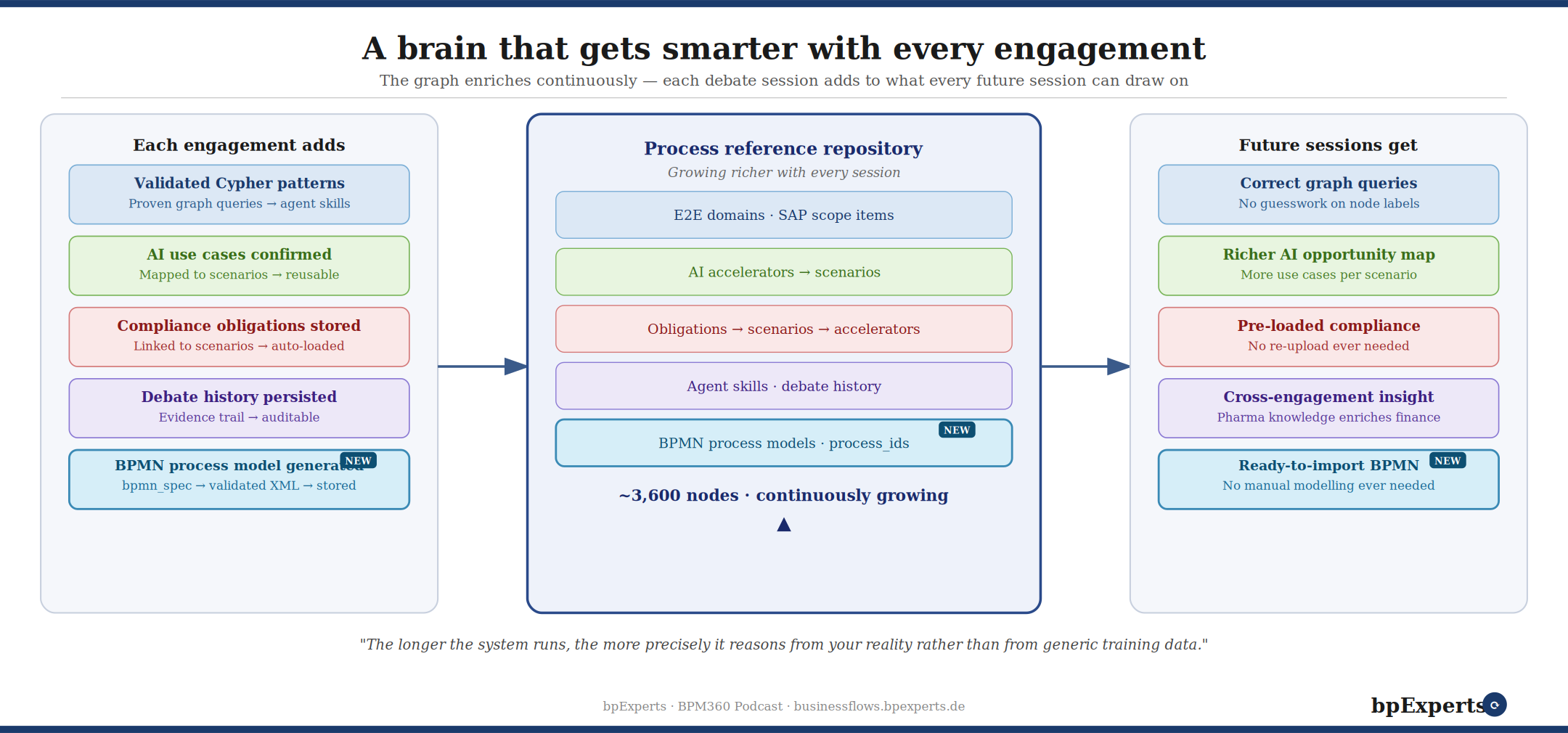

The updated engagement loop — BPMN process models are now generated automatically and stored back into the knowledge graph, making them available to every future session that touches the same scenarios.

What the Process Designer agent now emits

The original process designer translated a debate synthesis into a human-readable description of a BPMN process: swim lanes, tasks, gateways, flows, and compliance checkpoints. That description was accurate and useful — but it required a process modeller to convert it into an actual diagram.

The updated process designer emits exactly the same human-readable output, plus a new structured block called bpmn_spec. This is a machine-readable JSON object that follows an exact schema:

Every pool and lane is defined with a stable snake_case identifier

Every task is typed: serviceTask for AI agent steps, userTask for human decisions

Every gateway is typed (exclusive or parallel) with documentation explaining the decision logic

Every sequence flow references its source and target by the IDs defined above, with named labels for gateway branches

Documentation strings are capped at 300 characters and written for the audit trail, not for the modeller

The schema is the contract between the debate pipeline and the BPMN Builder. When the bridge receives a valid bpmn_spec, it can construct the entire process model without any human interpretation. The process designer is the architect. The bridge and builder are the construction crew.
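To make the contract concrete, here is a small sketch of what a spec and a pre-flight check could look like. The key names (`pools`, `lanes`, `events`, `tasks`, `gateways`, `flows`, `source`, `target`, `documentation`) are assumptions for illustration, since the actual bpmn_spec schema is not reproduced in this article; the rules being checked are the five listed above.

```python
import re

SNAKE_CASE = re.compile(r"[a-z][a-z0-9]*(_[a-z0-9]+)*")
MAX_DOC_CHARS = 300
TASK_TYPES = {"serviceTask", "userTask"}
GATEWAY_TYPES = {"exclusive", "parallel"}


def check_spec(spec: dict) -> list[str]:
    """Return a list of rule violations; an empty list means the spec passes."""
    issues = []
    # Rule 1: pools and lanes carry stable snake_case identifiers.
    for pool in spec.get("pools", []):
        for item in (pool, *pool.get("lanes", [])):
            if not SNAKE_CASE.fullmatch(str(item.get("id", ""))):
                issues.append(f"id is not snake_case: {item.get('id')!r}")
    # Collect node ids and enforce the documentation length cap.
    node_ids = set()
    for kind in ("events", "tasks", "gateways"):
        for node in spec.get(kind, []):
            node_ids.add(node["id"])
            if len(node.get("documentation", "")) > MAX_DOC_CHARS:
                issues.append(f"{node['id']}: documentation exceeds {MAX_DOC_CHARS} characters")
    # Rule 2: every task is a serviceTask or a userTask.
    for task in spec.get("tasks", []):
        if task.get("type") not in TASK_TYPES:
            issues.append(f"{task['id']}: unknown task type {task.get('type')!r}")
    # Rule 3: every gateway is typed and documents its decision logic.
    for gateway in spec.get("gateways", []):
        if gateway.get("type") not in GATEWAY_TYPES:
            issues.append(f"{gateway['id']}: unknown gateway type {gateway.get('type')!r}")
        if not gateway.get("documentation"):
            issues.append(f"{gateway['id']}: gateway has no documentation")
    # Rule 4: flows may only reference ids defined above.
    for flow in spec.get("flows", []):
        for end in ("source", "target"):
            if flow.get(end) not in node_ids:
                issues.append(f"{flow['id']}: {end} {flow.get(end)!r} is not defined")
    return issues


example_spec = {
    "pools": [{"id": "service_procurement", "name": "Service Procurement",
               "lanes": [{"id": "procurement_lead", "name": "Procurement Lead"}]}],
    "tasks": [
        {"id": "classify_intake", "type": "serviceTask", "lane": "procurement_lead",
         "documentation": "AI agent classifies the incoming request."},
        {"id": "approve_award", "type": "userTask", "lane": "procurement_lead",
         "documentation": "Human approval of the contract award."},
    ],
    "gateways": [{"id": "above_threshold", "type": "exclusive", "lane": "procurement_lead",
                  "documentation": "Routes by contract value against the threshold."}],
    "flows": [
        {"id": "flow_1", "source": "classify_intake", "target": "above_threshold"},
        {"id": "flow_2", "source": "above_threshold", "target": "approve_award", "name": "Yes"},
    ],
}

print(check_spec(example_spec))
```

A check like this would sit in front of the bridge, so that a malformed spec is rejected before any tool call is made rather than failing halfway through construction.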

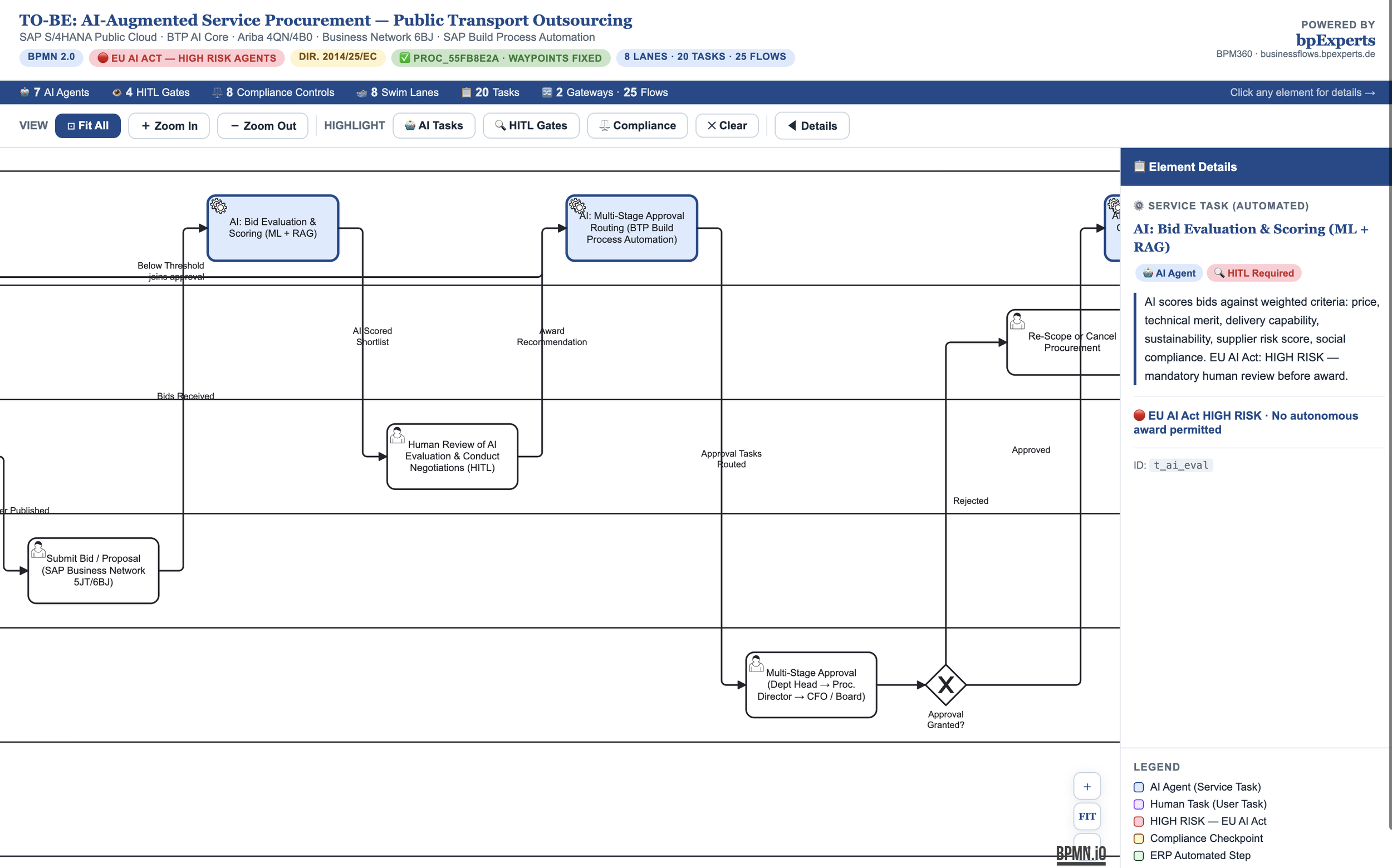

An example: AI-augmented service procurement for a public transport outsourcer

To validate the full pipeline, we ran a complete engagement on a real business problem.

A company that operates in public transport outsourcing regularly procures complex services and runs on SAP S/4HANA Public Cloud. Their purchase requisition process takes weeks, sometimes months. Complex services require an RFP and multiple approval stages before a contract can be negotiated. Only then can the purchase requisition (PR) and purchase order (PO) be created in SAP. The question: how to deploy AI and a workflow engine to transform this end to end.

The debate agents ran their full analysis against the Business Flows knowledge graph. The process analyst identified the primary E2E anchor, Service Procurement, and mapped it to seven SAP scope items across Ariba Sourcing (4QN), Ariba Contracts (4B0), Guided Buying (3EN), the Business Network (6BJ), and Fieldglass (22K). The innovator identified five high-value AI use cases, from intake classification (60–70% time reduction) to contract-to-PO automation (70–80% effort reduction, error rate below 2%). The compliance critic raised a HIGH-to-CRITICAL risk surface: Directive 2014/25/EU threshold rules, GDPR Article 22 automated decision requirements, EU AI Act Annex III high-risk classification for three of the five AI agents, and the Alcatel standstill obligation for above-threshold contract awards. The solution arbiter scored SAP standard against BTP AI extensions across eight capability dimensions.

The synthesis produced a recommendation — Strongly Recommended, conditional on compliance-first implementation — and a three-wave deployment roadmap.

The process designer then emitted a bpmn_spec covering eight swim lanes, twenty tasks, two gateways, and twenty-five sequence flows. The BPMN Bridge executed twenty-eight tool calls in the correct dependency order. The validator returned: valid: true, issues: [].

The result is the process diagram below.

The live process — interactive, embeddable, importable

The output of the pipeline is not a static image. It is a fully interactive BPMN 2.0 diagram rendered with bpmn.io, with the complete process logic, swim lane structure, and element documentation intact.

What you can do with this diagram directly:

Click any element to see its full documentation, compliance notes, and AI agent details

Use the 🤖 AI Tasks highlight to see all seven AI agents in one view

Use the 🔍 HITL Gates highlight to see every mandatory human-in-the-loop checkpoint — the ones that are there because the compliance critic made them a precondition of convergence

Use the ⚖️ Compliance highlight to see every regulatory control point embedded in the process

Export the underlying BPMN 2.0 XML for import into any standards-compliant modelling tool

The diagram is not a visualisation of a generic procurement process. Every element in it was placed there because the debate established it belonged there. The eight-stage approval routing structure reflects the EU Directive threshold matrix. The AI: Intake Classification task carries the HIGH RISK tag because the compliance critic flagged EU AI Act Annex III before the synthesizer would accept convergence. The Alcatel standstill task exists because the compliance critic cited the obligation and the solution arbiter confirmed it as non-negotiable regardless of solution choice.

The process was not designed and then checked for compliance. It was designed compliance-first, with the HITL gates and regulatory checkpoints as the skeleton from which everything else was built.

The presentation layer

The same engagement also produced a full corporate presentation — twenty-five slides in the bpExperts Century Gothic template — covering the client challenge, the Business Flows knowledge graph anchors, the five AI use cases, the solution architecture comparison, the process overview, the compliance map, the three-wave roadmap, and the final verdict.

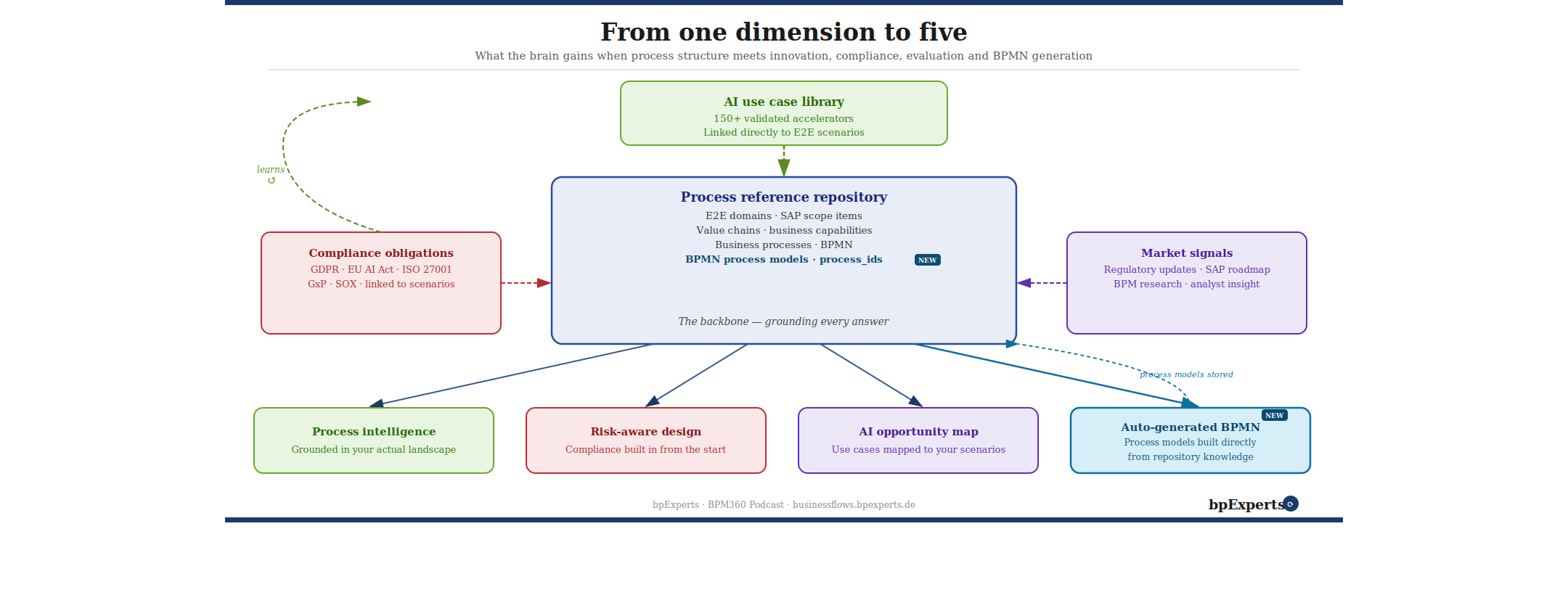

The system now produces five dimensions of output from a single debate engagement — including auto-generated BPMN that feeds back into the knowledge graph.

The presentation was not summarised from the debate. It was structured from it. The slide content maps directly to the agent positions: the compliance slide draws from the compliance critic's output, the ROI slide draws from the innovator's quantified use cases, the architecture comparison draws from the solution arbiter's scored matrix. Every claim in the deck has a traceable origin in the debate transcript.

This matters for two reasons. First, it is faster: a complete client-ready deck is produced as a side effect of the analysis, not as a separate workstream. Second, it is more defensible: the deck contains no claims that were not also argued and tested in the debate. The compliance critic that blocked three AI use cases pending GDPR controls is also the source of the compliance slide that lists those controls as prerequisites.

What continuous improvement looks like now

Each engagement now writes more back to the graph than before. In addition to the compliance documents, Cypher patterns, AI use cases, and debate history described in the previous article, the graph now also accumulates:

Generated BPMN process models, stored with their process_id and linked to the E2E scenarios they cover. When a future engagement touches the same scenarios — Service Procurement, or any of the seven scope items mapped in this one — the existing process model is available as a starting point. The next consultant does not start from a blank diagram. They start from a validated, compliance-embedded model that was the output of a structured debate.

bpmn_spec schemas, stored as reusable templates. The lane structure, task taxonomy, and gateway logic developed for complex-service procurement in a utilities context do not disappear after one engagement. They are available as a reference for the next one, with the compliance controls already modelled in.

The knowledge graph does not just get richer. It gets more specific — progressively more closely matched to the types of engagements that have actually been run through it, the compliance frameworks that have actually been argued, and the process structures that have actually been validated.

What this means for how process design work gets done

The traditional sequence in a BPM engagement is: analyse → workshop → model → review → validate → document. Each step is a handoff. Each handoff is a compression — something is lost in translation between the analysis and the workshop, between the workshop discussion and the model, between the model and the documentation.

The pipeline described here compresses that sequence differently. The analysis and the workshop happen inside the debate. The model is produced directly from the synthesis. The documentation is a side effect of the process designer's output. The review happens through the validator. The compliance checkpoint is embedded in the structure, not appended to it.

What remains for the human practitioner is the work that should never have been delegated in the first place: deciding whether the question was the right question, whether the synthesis reflects the political reality of the organisation, and what the recommendation means for the people whose work it will change.

The brain now produces the drawings. What it cannot produce is the judgement about whether the building should be built at all.